AI and applicant tracking systems (ATS) are common in business, automatically screening out hundreds of candidates. A 2019 study from Oracle found that 36% of HR professionals expected AI usage to be high or very high for talent acquisition moving forward. However, this technology often leaves applicants from underrepresented groups in the lurch.

“A lot of folks from diverse backgrounds might not have a traditional resume, but they might have the right skill set [for an open role],” says Phil Strazzulla of Select Software Reviews, which evaluates human resource software. An ATS searching for keywords may cut underrepresented candidates, even if they are capable of doing the job.
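To make the keyword problem concrete, here is a minimal sketch of an exact-match keyword screen. It is an illustration only, not how any particular ATS works; the function name `keyword_screen`, the keyword list and both sample resumes are hypothetical.

```python
import re

def keyword_screen(resume_text, required_keywords):
    """Pass a resume only if every required keyword appears verbatim.

    Hypothetical helper: real ATS products may use very different logic.
    """
    words = set(re.findall(r"[a-z0-9+#]+", resume_text.lower()))
    return all(keyword.lower() in words for keyword in required_keywords)

# Hypothetical keywords a recruiter might configure for an analyst role.
required = ["python", "sql", "bachelor"]

# Two made-up candidates with comparable skills but different wording.
traditional = "Bachelor of Science. Built reports with Python and SQL."
nontraditional = (
    "Self-taught analyst. Wrote Python scripts and database queries "
    "for a community nonprofit."
)

traditional_passes = keyword_screen(traditional, required)
nontraditional_passes = keyword_screen(nontraditional, required)

print("traditional:", traditional_passes)
print("nontraditional:", nontraditional_passes)
```

In this sketch the second candidate is cut because the resume says "database queries" rather than "SQL" and lists no degree, even though the described work may be equivalent.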

However, as more companies emphasize building diverse workforces, new software is coming to the forefront. Instead of slashing candidates, these programs are designed to keep hiring equitable. Here are four popular software solutions designed to reduce hiring bias:

1. Humanly

- What it is: Typically, recruiters take weeks to scan applications and set up initial meetings. The Humanly app aims to screen candidates and schedule interviews in less than three minutes. “Our focus…is around inclusion,” says Bennet Sung, head of marketing at Humanly. “The goal is to be able to talk to every candidate that applies.”

- How it works: Candidates answer interview questions in an AI-powered chat. If the AI determines that the candidate is a fit for the role, the system automatically schedules a follow-up with a recruiter. An analytics tool helps recruiters ask every candidate the same questions, which guards against skewed questions and unconscious bias.

- Who’s using it: Humanly software is aimed at companies looking to fill a large number of open positions at a time.

- How much it costs: Price is determined by the number of jobs posted. Most contracts range from $1,500 to $3,000 per month, Sung says. HR teams can also pay for additional features, including chatbots and bonus analytics.

2. Paradox

- What it is: Paradox turns the job application process into a mobile conversation. Like Siri or Alexa, an AI-powered assistant named Olivia makes it easier to schedule an interview, ask about benefits and discuss job requirements — all via text message. Olivia can also hold conversations in multiple languages.

- How it works: Sending a text message to a specific code kick-starts a conversation with Olivia, who then asks basic questions. For example, Olivia can ask whether candidates are legally authorized to work in the U.S. When it comes to DEI, Olivia can bridge language barriers by holding conversations in 30 languages. According to chief marketing officer Josh Zywien, the AI system does not see race, gender, ethnicity or background.

- Who’s using it: Paradox has been used by companies of all sizes — at both the executive and personnel levels. Companies in retail, healthcare, fast food and financial services have used Paradox.

- How much it costs: Pricing varies by client.

3. Fetcher

- What it is: Passively picking through applications submitted via job boards can lead to homogeneity. Meanwhile, diverse candidates are often found through proactive sourcing. Fetcher helps recruiters improve their DEI practices by filling their pipeline with candidates of all backgrounds.

- How it works: Recruiters review prospective candidates and indicate whether they are a fit in Fetcher’s interface. Then, Fetcher’s AI finds patterns that connect qualified applicants and begins sending candidates to users. Recruiters can then contact matches with personalized emails. “Using automation removes some unconscious bias, allowing candidates to be contacted that might have been filtered out by recruiters previously,” says Melissa Roer, vice president of marketing at Fetcher. “Fetcher also includes diversity analytics, so recruiters can easily see if they’re over- or under-indexing on certain genders, ethnicities, education levels, etc. in order to reevaluate their hiring process and ensure a fair candidate pool.”

- Who’s using it: Fetcher serves internal recruiting teams at companies that make multiple, full-time hires throughout the year.

- How much it costs: Contracts vary based on number of users but start at $8,000 annually. This includes sourcing, email outreach, analytics and more.
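The over- or under-indexing check that Roer describes can be illustrated with a minimal sketch. This is not Fetcher's implementation, which the article does not detail; the function name `representation_index`, the group labels and the benchmark shares are all assumptions made for the example. The idea is to divide each group's share of the candidate pipeline by a benchmark share, so that values below 1 suggest under-indexing and values above 1 suggest over-indexing.

```python
from collections import Counter

def representation_index(pipeline, benchmark):
    """Compare each group's share of the pipeline with a benchmark share.

    pipeline:  list of group labels, one per candidate (hypothetical data).
    benchmark: dict mapping group label -> expected share between 0 and 1.
    Returns a dict mapping group label -> pipeline share / benchmark share.
    """
    if not pipeline:
        raise ValueError("pipeline is empty")
    counts = Counter(pipeline)
    total = sum(counts.values())
    return {
        group: (counts.get(group, 0) / total) / share
        for group, share in benchmark.items()
    }

# Made-up pipeline of 100 candidates and a made-up 50/50 benchmark.
pipeline = ["women"] * 30 + ["men"] * 70
benchmark = {"women": 0.5, "men": 0.5}

index = representation_index(pipeline, benchmark)
for group, value in index.items():
    status = "under-indexing" if value < 1 else "at or over benchmark"
    print(f"{group}: {value:.2f} ({status})")
```

Choosing the benchmark is the judgment call here: it could be the local labor market, the applicant pool or a company goal, and that choice belongs to the recruiting team rather than the software.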

4. Bryq

- What it is: Bryq simulates real-world business scenarios to see how candidates would act in a role. Recruiters can then make hiring decisions based on an applicant’s actions, instead of relying solely on resume bullets. According to CEO Markellos Diorinos, Bryq’s AI technology doesn’t consider race, gender or age when building assessments, making the process more fair.

- How it works: Candidates are presented with real-world scenarios and must pick from a set of responses. Questions are designed to measure an applicant’s soft skills and personality. The back-end then analyzes answers given in the business simulation. Bryq generates actionable insights by explaining to recruiters how an applicant matches their ideal candidate profile. The AI system then suggests a shortlist of candidates to move forward.

- Who’s using it: Bryq’s customers range from large multinational companies, such as Ernst & Young, to small start-ups and individual companies that want to grow their teams.

- How much it costs: Plans start as low as $199 per month for a company that only hires a few employees annually. For large multinational organizations, contracts can be upwards of $100,000 per year.

Best Practices for Using AI in Hiring

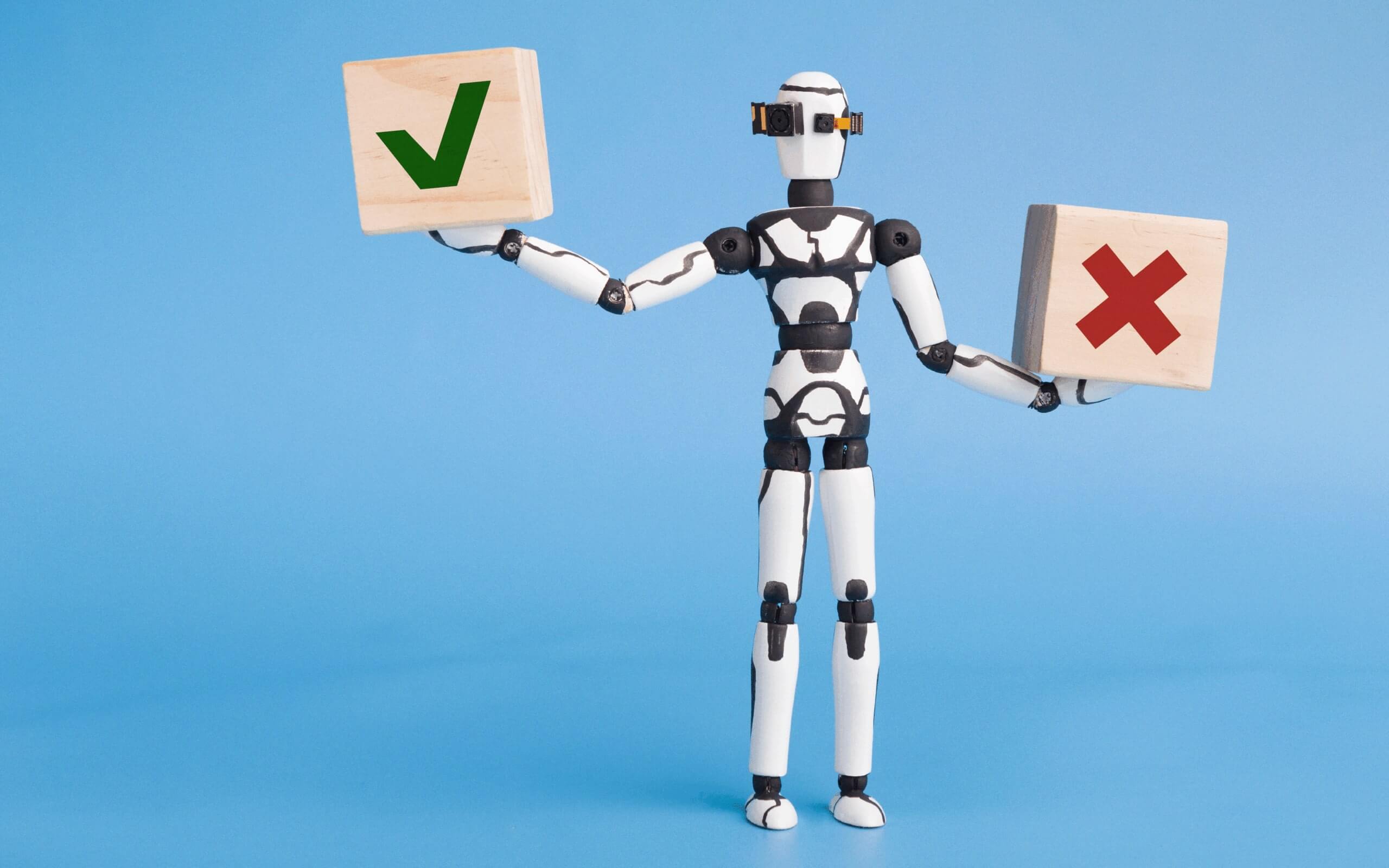

While AI tools can help teams connect with underrepresented talent, software solutions alone cannot guarantee diversity. Analytics from technology should be paired with insights from recruiters at the company, Strazzulla says. Be aware of these common pitfalls:

- Over-automation. Avoid automating every step of the hiring process. Recruiters should make an effort to include applicants with transferable skills who may not look perfect on paper.

- Focusing only on hiring and ignoring the experiences of diverse employees. Even with tools that give DEI a boost, is your company actually a good place to work as a Black professional? As a woman? As a person with disabilities? Consider the broader picture. Track metrics that reflect the experiences of employees from underrepresented groups.

- Lack of oversight. Helpful software programs need human oversight. Remember, at the end of the day, real people make the final decisions. “The AI is good at understanding you want to have people in X geography with Y skills,” says Strazzulla. “But it’s really down to the human to say, ‘We’d like to hire someone with this background or that background.’”